
As the AI video wars continue to rage, with new, realistic video-generating models being released on a near-weekly basis, early leader Runway isn’t ceding any ground in terms of capabilities.

Rather, the New York City-based startup, funded to the tune of $100M+ by Google and Nvidia, among others, is deploying new features that help set it apart. Today, for instance, it launched a powerful new set of advanced AI camera controls for its Gen-3 Alpha Turbo video generation model.

Now, when users generate a new video from text prompts, uploaded images, or their own video, they can also control how the AI-generated effects and scenes play out much more granularly than with a random “roll of the dice.”

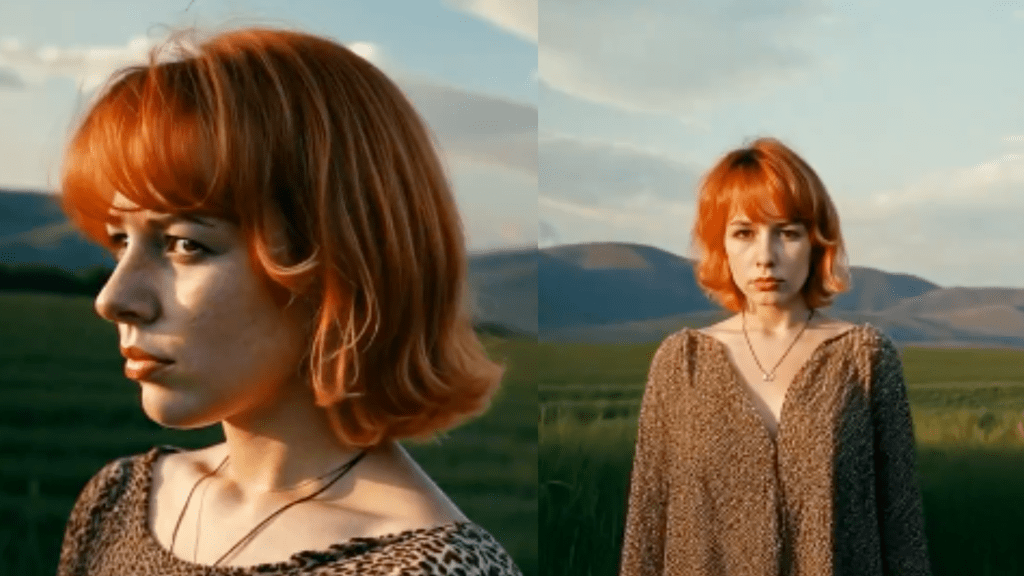

Instead, as Runway shows in a thread of example videos uploaded to its X account, the user can zoom in and out of a scene and its subjects while preserving the AI-generated character forms and the setting behind them, realistically placing creators and their viewers in a fully realized, seemingly 3D world, as if they were on a real movie set or on location.

As Runway CEO Cristóbal Valenzuela wrote on X, “Who said 3D?”

This is a big leap forward in capabilities. Although other AI video generators, and Runway itself, previously offered camera controls, they were relatively blunt, and the videos they produced often seemed random and limited: trying to pan up, down, or around a subject could flatten it into 2D or introduce strange deformations and glitches.

What you can do with Runway’s new Gen-3 Alpha Turbo Advanced Camera Controls

The Advanced Camera Controls include options for setting both the direction and intensity of movements, providing users with nuanced capabilities to shape their visual projects. Among the highlights, creators can use horizontal movements to arc smoothly around subjects or explore locations from different vantage points, enhancing the sense of immersion and perspective.

For those looking to experiment with motion dynamics, the toolset allows for the combination of various camera moves with speed ramps.

This feature is particularly useful for generating visually engaging loops or transitions, offering greater creative potential. Users can also perform dramatic zoom-ins, navigating deeper into scenes with cinematic flair, or execute quick zoom-outs to introduce new context, shifting the narrative focus and providing audiences with a fresh perspective.

The update also includes options for slow trucking movements, which let the camera glide steadily across scenes. This provides a controlled and intentional viewing experience, ideal for emphasizing detail or building suspense. Runway’s integration of these diverse options aims to transform the way users think about digital camera work, allowing for seamless transitions and enhanced scene composition.

These capabilities are now available for creators using the Gen-3 Alpha Turbo model. To explore the full range of Advanced Camera Control features, users can visit Runway’s platform at runwayml.com.

While we haven’t yet tried the new Runway Gen-3 Alpha Turbo model, the videos showing its capabilities indicate a much higher level of precision in control. That should help AI filmmakers, including those from major legacy Hollywood studios such as Lionsgate, with whom Runway recently partnered, to realize major motion picture quality scenes more quickly, affordably, and seamlessly than before.

Asked by VentureBeat via direct message on X whether Runway had developed a 3D AI scene generation model, something currently being pursued by rivals from China and the U.S. such as Midjourney, Valenzuela responded: “world models :-).”

Runway first mentioned it was building AI models designed to simulate the physical world back in December 2023, nearly a year ago, when co-founder and chief technology officer (CTO) Anastasis Germanidis posted on the Runway website about the concept, stating:

“A world model is an AI system that builds an internal representation of an environment, and uses it to simulate future events within that environment. Research in world models has so far been focused on very limited and controlled settings, either in toy simulated worlds (like those of video games) or narrow contexts (such as developing world models for driving). The aim of general world models will be to represent and simulate a wide range of situations and interactions, like those encountered in the real world.”

As evidenced in the new camera controls unveiled today, Runway is well along on its journey to build such models and deploy them to users.
